The Role of Competing Objectives in Exercise Design and Evaluation

As an exercise practitioner with experience in different types and levels of exercises, I question the efficacy of our existing exercise evaluation paradigms (e.g., HSEEP, REPP, CSEPP, etc.). In my experience, they are messy and misaligned with the overarching objectives we are trying to achieve.

The Problem with Exercise Objectives

This messiness is partly due to the fact that we create dueling objectives such as training and evaluation. For example, if you slow down or modify an exercise to ensure responders understand and can perform their duties (training objective), can you objectively state the system capability they performed was successfully tested (performance objective)? I have a hard time saying this unless the capability's performance is duplicated in more challenging and realistic environments.

Additionally, the limitations of time and budget influence the desire to maximize the value of each exercise conducted. Running an exercise, especially a large-scale, multi-organizational exercise that more realistically reflects a full-blown response, is not an easy task. It requires many personnel from different organizations and disciplines to agree on the objectives of the exercise. Reconciling these objectives into a refined set of clear objectives is a tough task by itself.

The resulting objectives, though, must also be considered collectively to understand if and how they may compete with each other. For example, the most valuable learning and evaluation for system performance comes from understanding and examining the relationships between different plans and activities, not the plans and activities themselves. As a result, additional objectives surrounding plan testing should be carefully considered in order to ensure a coherent exercise design that will produce the desired behavior so it can be effectively evaluated.

The Four Types of Objectives

There are four types of objectives to understand in order to ensure the exercise design is aligned with your evaluation framework:

- Training Objectives seek to improve the knowledge, skills and abilities of a single person or group. This objective type is often encountered in tabletop exercises and drills where the goals are to familiarize people with plans, procedures, and equipment.

- Task/Activity Objectives seek to demonstrate and verify the knowledge, skills, and abilities of a single person or group. The task or activity being performed can be the execution of a plan; however, the objective is for a person or group to demonstrate competence in that plan, not validate the plan itself.

- Plan/Procedure Objectives seek to validate a plan and its assumptions. These objectives focus on learning about the plan itself. The successful execution of the plan, though, does not automatically indicate the plan will actually make the organization better prepared. That objective is better assessed in system performance objectives.

- System Performance Objectives seek to determine if the response system met the needs of the constituents it serves. These objectives reflect the interaction effects between different plans, processes, actions, tasks, etc. that supported the response. These objectives can include both internal (e.g., EOC fulfilling fire department resource needs) and external (e.g., setting up two field hospitals) constituents.

All objectives are needed and no objective is more noble than another. As a systems engineer, though, I find system performance the most intriguing objective type, and the one with the greatest potential to help us understand what it means to be prepared. There is much more work to be done in this area. For now, a good exercise is one that enables the performance of the behavior under observation AND the evaluation of established objectives.
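One way to make the four objective types concrete during exercise planning is to tag each proposed objective with its type and flag combinations that deserve a deconfliction conversation. The sketch below is illustrative only: the class names, the `flag_for_review` helper, and the single flagged pairing (training versus system performance, the example discussed earlier) are assumptions, not part of HSEEP or any other framework.

```python
from dataclasses import dataclass
from enum import Enum
from itertools import combinations


class ObjectiveType(Enum):
    TRAINING = "training"
    TASK_ACTIVITY = "task/activity"
    PLAN_PROCEDURE = "plan/procedure"
    SYSTEM_PERFORMANCE = "system performance"


@dataclass(frozen=True)
class Objective:
    description: str
    kind: ObjectiveType


# Hypothetical list of type pairings worth a closer look. Only the
# training vs. system performance pairing comes from the post; a
# planning team would add or remove pairings based on its own experience.
REVIEW_PAIRS = {
    frozenset({ObjectiveType.TRAINING, ObjectiveType.SYSTEM_PERFORMANCE}),
}


def flag_for_review(objectives):
    """Return pairs of objectives whose types may compete with each other."""
    flagged = []
    for first, second in combinations(objectives, 2):
        if frozenset({first.kind, second.kind}) in REVIEW_PAIRS:
            flagged.append((first, second))
    return flagged


proposed = [
    Objective("Familiarize new EOC staff with resource request forms",
              ObjectiveType.TRAINING),
    Objective("Validate the mass care plan's shelter staffing assumptions",
              ObjectiveType.PLAN_PROCEDURE),
    Objective("Determine whether the EOC met fire department resource needs",
              ObjectiveType.SYSTEM_PERFORMANCE),
]

for first, second in flag_for_review(proposed):
    print(f"Review together: '{first.description}' <-> '{second.description}'")
```

A flag here is not a verdict that two objectives cannot coexist; it is only a prompt for the planning team to decide whether the exercise design can support both.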

Exercise Design and Evaluation

Exercise design and evaluation can be significantly improved with an understanding of how objectives are different and potentially in competition with each other. For example, you may design the exercise and your evaluation materials to evaluate workforce competence rather than capability performance if you want to validate training success. If you want to validate a plan, then your evaluation will reflect the nuances of that plan and its assumptions.

While the post-exercise evaluation process with root cause analysis and other techniques can help identify and deconflict important issues on the back end, this should not be relied upon. What happens if you find one prevailing incident that affects your assessment of other objectives you are trying to evaluate? Sure, you may pull out some marginal benefit (e.g., small lessons learned and areas for improvement), but are you able to better understand and significantly improve your overall operations with such limited data? In my experience, no.

Parting Thoughts...

Exercises with their complexity and high costs need to do more than provide marginal benefits. This starts with ensuring exercise objectives are well-aligned and do not conflict with each other.

Additionally, existing exercise design and evaluation frameworks need to acknowledge that competing objectives occur and incorporate a deconfliction process to ensure the value of the exercise is maximized before the exercise is designed and conducted. The supporting exercise evaluation material then needs to reflect the objectives being sought. Evaluation material should not simply be based on high-level core capabilities and may require multiple "types" of evaluation material that address the different types of objectives.

Have you experienced competing exercise objectives? What happened and what did you do?

Evaluating Preparedness at Different Levels of Analysis

I recently conducted an academic/practice-based research project that provided a better understanding of preparedness evaluation. One interesting thing to come out of this research was a capabilities-based exercise framework for a Federal regulatory agency that links capabilities to design concepts to evaluation criteria. I will post an overview of this research once it is officially published.

Another interesting aspect of the research confirmed how preparedness evaluation is still a complicated and difficult process that doesn't always yield the best results. We still have many more questions than answers when trying to link evaluation to our learning and preparedness objectives. For example, how do you understand how much "more" prepared you are between exercises and disasters? Current assessment processes tend to be ad hoc and very subjective.

HSEEP, noble in intent and still a benefit, lays the groundwork for evaluating preparedness. However, it stops short of a well-aligned and pragmatic process that helps us learn from each exercise or disaster response while also tracking cumulative learning over time. Many of the issues and gaps mentioned above are still experienced in the after-action and corrective-action processes following exercises and disasters.

It is often mystifying how we develop and track our findings. For example, are these findings really the most important? Are they the right set of findings? How do we capture and articulate very real complexity issues such as network or interaction effects? Are we investing in certain performance capabilities when we should be rethinking how the system is designed in the first place?

There are no simple answers to these questions, but below are a few different dimensions of capabilities to think about when evaluating your exercises or disaster responses. These dimensions can also be considered "levels of analysis" in research parlance. However, don't be confused by "levels"; there is no hierarchical relationship between them.

Individual

Individual ability is the backbone of a good disaster response. While I share the view that people should not be singled out for poor performance, understanding responders' overall knowledge, skills and abilities is an important level of analysis that needs to be captured. You should be asking: In order to have performed better during the exercise or response, what knowledge, skills or abilities should the responders have had prior to the event? You may also separate individuals into different groups by role level, such as senior leadership, management/coordination, and tactical; or by function, such as medical, fire, mass care, etc. The goal in this level of analysis is to improve individual abilities.

Team/Organizational

To put it bluntly, well-organized groupings of people make things happen. They are organized by specialty or by organization and bring resources and capabilities that are much needed in a response. Understanding team or organizational capabilities helps to identify critical response gaps that need to be closed before future disasters. You should be asking: What were the organizational or team capabilities that contributed to the success or failure of the exercise or disaster response? Why/How? Were there any that weren't used? Redundant? Ill-planned or ill-defined? This level of analysis is important for ensuring your "group" is ready to respond with the capabilities needed in the future. However, you must also think critically about other capabilities that may be needed in the future and for which there is no precedent.

System

The system is the least understood but, in my opinion, most important level of analysis. The difference between this and the team/organizational level of analysis is best captured in the following statement: Just because you got the job done doesn't mean getting to that point was easy, efficient or effective. This level of analysis addresses the complexity of a multi-agency response and requires a different set of questions to investigate.

For example, when you look back at all the different entities that supported the response, how did it go? Where did breakdowns occur? How was the coordination and information sharing across people and organizations? If you are tackling these questions, you are on the right track. I would add that you should also think about the impact of information asymmetry, how the network as a whole performed and the cascading effects of different decisions or actions. Understanding this level helps you question the efficacy of your response system so that you may improve preparedness at a more fundamental/global level.

I anticipate many of you will intuitively understand the levels of analysis that I just articulated. You may have even experienced these issues first hand as I have over my many years developing and evaluating exercises. This post is meant to help frame your thinking, but unfortunately it won't provide a definitive answer on anything.

However, I look forward to your thoughts and opinions on this! What have been your experiences with preparedness evaluation? What do you find most problematic? Have you identified any best practices?

UPCOMING: National Information Sharing Exercise

Information sharing exercises are rare and hard to put on, but are important to learning about how to improve information sharing in disasters.

I am passing on this information about an upcoming information sharing exercise. Participation is open to many different organizations in the EM community and I encourage you to sign up and participate as soon as possible. The exercise will take place on May 11, 2016.

Below are the details that were provided to me:

"In May 2016, the National Information Sharing Consortium (NISC) will conduct CHECKPOINT 16, a virtual tabletop exercise that will allow participants to test, evaluate, and download for daily use various model web applications, tools, and data models for situational awareness and decision support. Dozens of organizations have signed up to participate in CHECKPOINT 16, with participants coming from state, local, and Federal government, non-profits, private sector companies, and academia. Participants can choose their level of participation, from being an observer, participating in a limited way using NISC-provided tools, to being a full-play participant integrating CHECKPOINT 16 tools into their own native operating environment throughout the exercise.

The exercise will take place from 11 am to 4 pm ET on May 11, 2016. For information on the exercise and to register, you can visit www.checkpoint16.org. So far the NISC has conducted two training events for the exercise, and these trainings can be viewed on the checkpoint 16 webpage; the next event will take place on April 21."

Exercising Social Media - Review of and Q&A with EMSocialSimulation

I was recently able to talk with both Corey Mulryan and Kyle McPhee from Hagerty Consulting, a well-known and fast growing emergency management consulting firm. Corey and Kyle have been leading an effort at Hagerty to develop a new social media exercise tool called EMSocialSimulation. This blog post contains a review of the tool as well as Hagerty's Q&A responses that provide additional information.

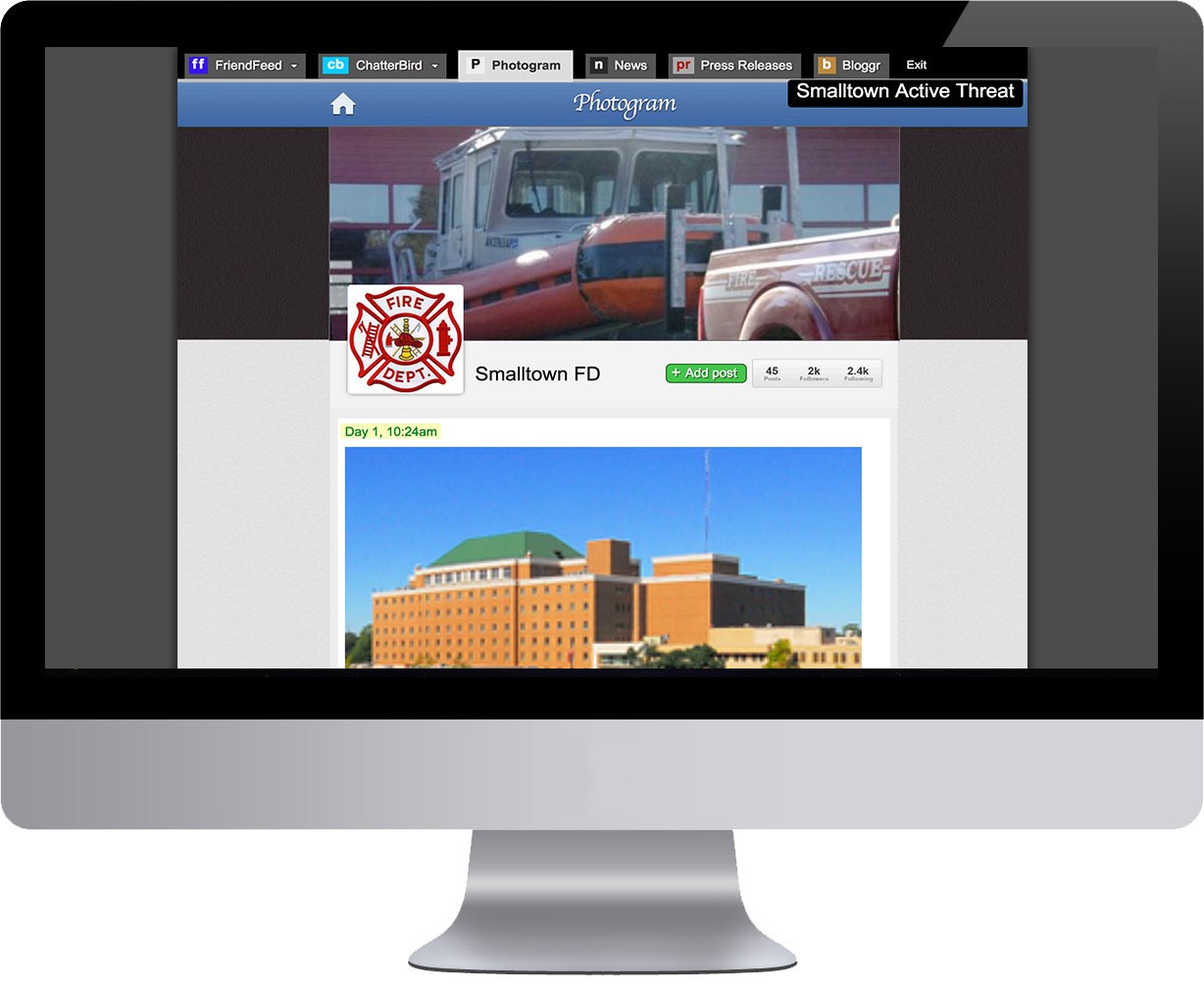

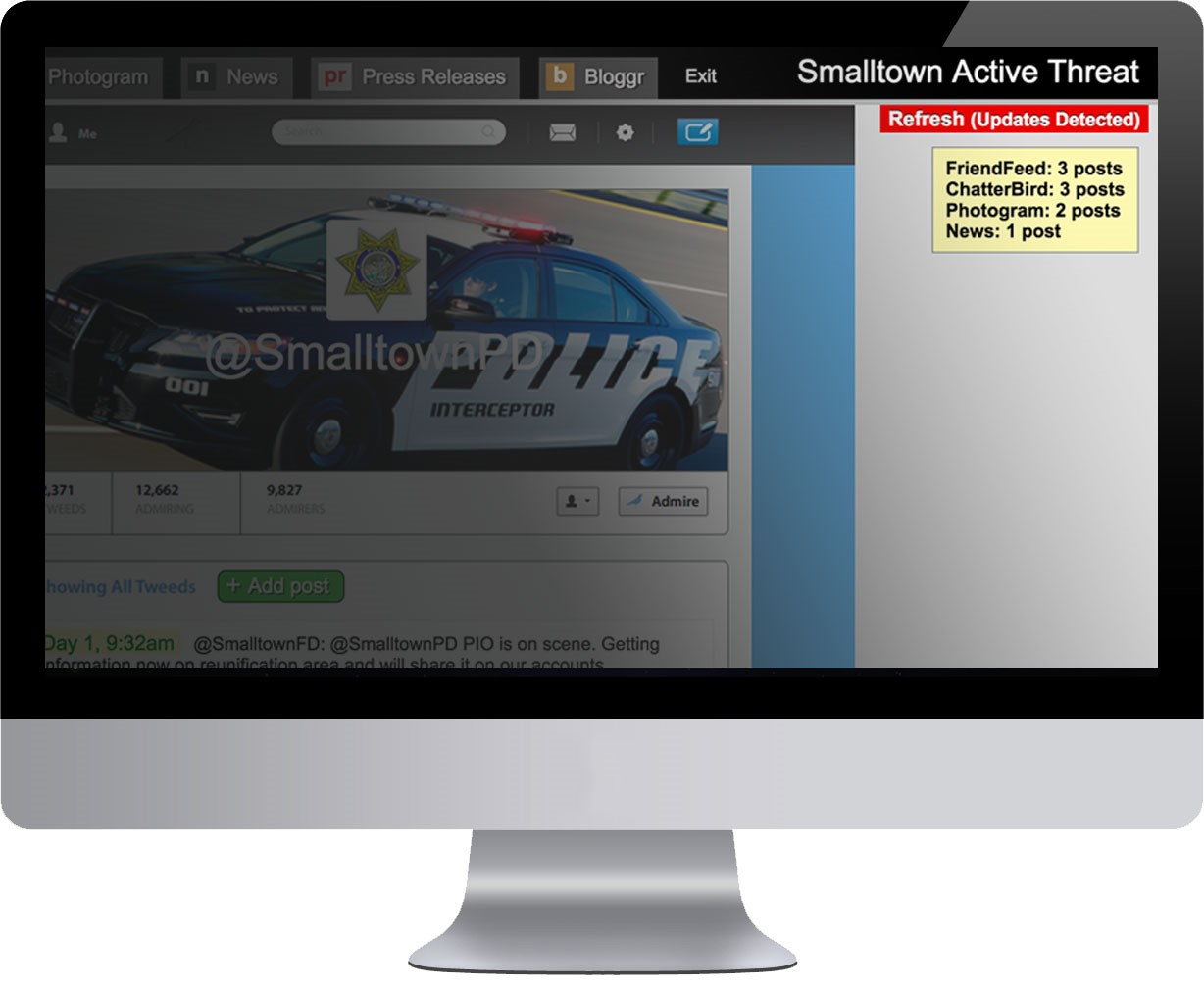

EMSocialSimulation is a great social media simulation tool geared toward organizations and jurisdictions looking to train and exercise on beginner to intermediate social media capabilities at an affordable price. I was impressed by how well they whittled down the feature set so as not to overwhelm users or exercise planners. This is perhaps a significant advantage over incumbents such as SocialSimulator, Conductrr, Polpeo and SimulationDeck that offer more advanced features and controls, but can easily become overwhelming to an organization that is new to social media.

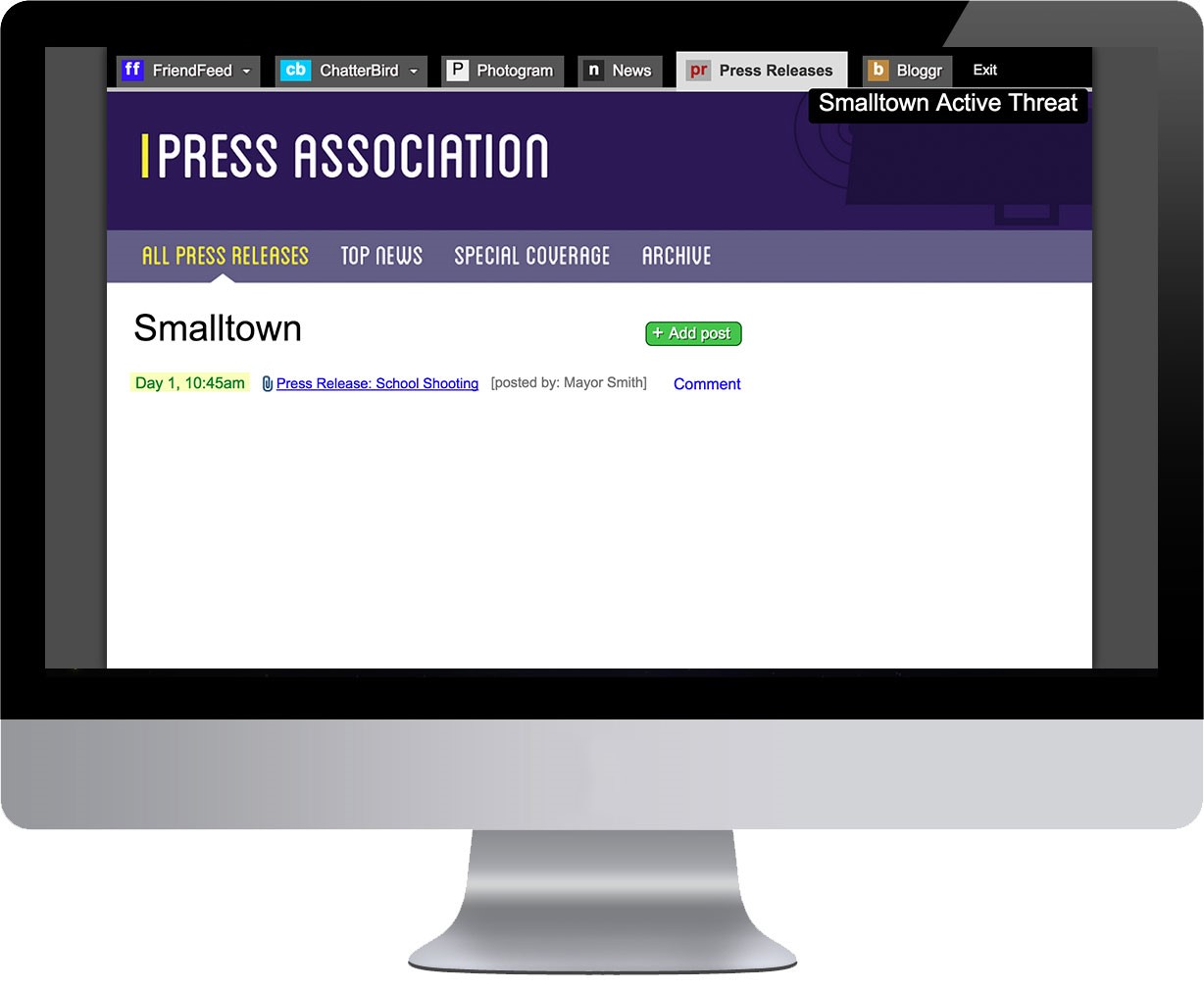

If you want to test different types of social media accounts such as all the Twitter accounts in your jurisdiction, EMSocialSimulation makes it easy to create multiple accounts on each simulated social media platform. It has accounts for FriendFeed (Facebook), ChatterBird (Twitter), Photogram (Instagram), News (Simulated Media Posts), and Press Releases.

Many of you in the public sector may be asking if the tool is aligned with HSEEP as you may be required to follow this methodology. Because EMSocialSimulation is just a simulation platform (not a methodology), this is the wrong question to be asking. EMSocialSimulation merely helps you execute HSEEP designed and developed exercises or other types of exercises related to social media. As such, it is aligned with HSEEP, but is certainly not an HSEEP methodology tool. You would plan an exercise just like you would normally and then use EMSocialSimulation to simulate the social media capabilities under the Public Information / Public Affairs emergency support function.

Hagerty has mostly been using EMSocialSimulation to support their existing clients, and as a result it is not as self-service oriented as I would have liked, especially as it has been in use for over twelve months. For example, you still have to go through a sales process with them and have them bulk upload injects, unless you want to do each one by hand. They also have inject templates available and are creating more for different hazards and scenarios; but again, you must still go through your Simulation Coordinator in order for these templates to be accessed and uploaded.

The video below walks you through EMSocialSimulation as well as Hagerty's social media exercise design and development process.

I am excited to see, though, that they allow the export of all simulation data for analysis. Most of the data can be analyzed by someone with basic Excel and analytic skills. However, Hagerty will also provide this type of analysis as a service. One data point they capture that might be useful is the response time between when a message was posted and when it was responded to. This can help you identify whether response times are within your guidelines or whether there was a problem with the social media response timing as a whole.
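For readers who would rather script this than use Excel, here is a minimal sketch of the response-time check. I have not seen the actual export layout, so the field names (`posted_at`, `responded_at`), the timestamp format, and the 30-minute guideline are all assumptions made for illustration.

```python
from datetime import datetime
from statistics import median

# Hypothetical rows, as if read from an exported spreadsheet.
# Field names and timestamp format are assumptions, not the tool's schema.
rows = [
    {"posted_at": "2016-05-11 10:00", "responded_at": "2016-05-11 10:12"},
    {"posted_at": "2016-05-11 10:05", "responded_at": "2016-05-11 10:50"},
    {"posted_at": "2016-05-11 10:20", "responded_at": ""},  # never answered
    {"posted_at": "2016-05-11 10:30", "responded_at": "2016-05-11 10:40"},
]


def response_minutes(rows, fmt="%Y-%m-%d %H:%M"):
    """Return minutes between post and response for each answered message."""
    minutes = []
    for row in rows:
        if not row["responded_at"]:
            continue  # unanswered messages are counted separately
        posted = datetime.strptime(row["posted_at"], fmt)
        responded = datetime.strptime(row["responded_at"], fmt)
        minutes.append((responded - posted).total_seconds() / 60)
    return minutes


def summarize(rows, guideline_minutes=30):
    """Summarize response timing against an assumed guideline."""
    minutes = response_minutes(rows)
    return {
        "answered": len(minutes),
        "unanswered": len(rows) - len(minutes),
        "median_minutes": median(minutes) if minutes else None,
        "over_guideline": sum(1 for m in minutes if m > guideline_minutes),
    }


summary = summarize(rows)
print(summary)
```

Counting unanswered messages separately matters: a good median among the messages that did get a reply can hide a large number that never did.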

Overall, I would recommend this tool to organizations and jurisdictions just beginning their foray into social media messaging and response. I would not say this is your Cadillac platform for social media simulation and it won't scale to thousands of tweets easily. But it should suffice and be all that is needed for many in the emergency management community. Just don't be scared off by the design of the tool looking like it was built in 2005. It still has the power you need it to have.

In the comments below, I would be interested in knowing your social media training and exercise challenges. What has been your experience with other tools?

I also asked Corey to answer some additional background questions you might find interesting:

What is your name and role in the Organization?

My name is Corey Mulryan and I am the Simulation Coordinator and simulation content developer for EMSocialSimulation (EMSS). I work with clients to develop the simulation, and if needed, help run the application on the day of the exercise.

What does your tool do?

EMSS helps users practice the use of social media in a realistic, safe, and secure environment. EMSS helps identify gaps in plans and operational structures where internal policies and procedures. Finally, EMSS is scalable, which results in cost effectiveness and customization.

What/who inspired you to create this?

Social media is here to stay and its role in emergency management has become a matter of expectation, not the exception. As an example, a client of ours asked if there was a way that we could incorporate social media into their exercise. We questioned our typical response and that sparked the innovation. We researched current solutions and found that they were not meeting the need. Some examples observed included low-tech options like sending a fax or email with simulated social media messages or creating private accounts on live systems, but that is not realistic and is risky, not to mention a lot of work for our clients. Other high-tech solutions seemed overdone, expensive, and outside of clients' reach.

The Hagerty team started discussing social media and agreed that its inclusion should be the standard, the same way we don’t think twice about following or including the Homeland Security Exercise and Evaluation Program (HSEEP) in every exercise. We wanted to provide our clients with an opportunity to practice social media, familiarize themselves with process and approach, and see how social media could be incorporated into what they do during normal operations and response.

What challenge does your tool help customers overcome?

Emergency Managers and first responders know they should be using social media. The University of San Francisco’s 2013 study on using social media identified that “80% of Americans expect emergency response agencies to monitor and respond on social media platforms, additionally, 33% expect help to show up within just 60 minutes of a post.”

Those numbers are a wake-up call. A public expectation has been established and EMSS helps meet this challenge by training staff and demonstrating to management the benefits of using social media in disaster response. During Hurricane Sandy, “the American Red Cross had 23 staffers monitor over 2.5M Sandy-related social media posts.” Agencies cannot hope that one person will be able to handle social media alone; EMSS allows them to cross-train multiple staff members to build knowledge and trust within an agency. EMSS allows players to remain engaged during a longer exercise with a steady flow of information that we try to keep interesting.

How does it do this?

EMSS hosts replicas of common social media platforms that can be customized based on the objectives of the engagement. Players are able to make posts and comment in a way that looks and feels real. Of course, all of this is controlled in a secure environment by the Simulation Controller. EMSS does not link to any social media platforms so there is zero chance that any messages sent or posted during a simulation will end up on an agency’s actual accounts. The Simulation Controller will develop injects to test various responses from the players. This can be issuing information, responding to requests, monitoring traffic, or anything else. The Simulation Controller will then upload injects in accordance with the exercise timeline and be able to respond to players in real time. After the simulation is complete, the Simulation Controller can export the data to an After-Action Report/Improvement Plan.

What is next for the tool?

This tool can really help people; we have seen it firsthand. We want to continue to help users experience social media in their training and exercise endeavors, and to bring the whole concept to life. We want this to foster the development of internal procedures and integration of social media into communications standards. We want to continue this process and work to better identify how social media is used to monitor situations, communicate with residents and stakeholders, share information, and reach the whole community. EMSS has been used across the country, with events ranging from a tabletop to a full-scale exercise. This tool is available for an affordable price and can include multiple stakeholders or formats, such as training, exercise, or simulation.

How can people get in touch, learn more or test the tool?

If anyone is interested in EMSS, they can go to our website for more information at www.emsocialsimulation.com. On the website they can fill out the contact us form at the bottom or send an email directly to emsocialsim@hagertyconsulting.com. We will quickly respond, schedule a demo, and discuss the application.

LaunchPAD - A New Exercise Delivery Tool + Q&A with the Founder

I was recently able to sit down with Robert Burton, Founder and Managing Director of Preparedex, LLC. The company recently launched a virtual exercise delivery platform called LaunchPAD. There are very limited technology options to support preparedness exercises, so I was very excited to learn more about LaunchPAD, especially given my background putting together large-scale exercises for almost four years.

LaunchPAD homes in on a very important issue: geographically distributed exercises that are hard to coordinate and execute. With this in mind, Preparedex has developed LaunchPAD with a "virtual" focus. The platform will deliver a pre-developed scenario (along with appropriate injects and events) to a virtual audience anywhere in the world. In this way, various distributed teams can work together toward the common goal(s) of the preparedness exercise. This can be more cost-effective for each exercise and more realistic when teams are not all operating out of the same location. If you are interested in the platform, you will probably find a solution that isn't very robust, but does solve this very important problem.

Also, because HSEEP is a flexible exercise design and development approach, I think this platform would likely fit within the policy doctrine for different types of drills, tabletop and functional exercises. LaunchPAD is designed to help you with both exercise delivery and running an exercise program with many exercises each year. Right now, though, Preparedex is focusing on building a good system rather than meeting doctrinal requirements. This may be a plus in the long run and enhance learning and evaluation.

The platform is fairly user friendly and they have some new features they want to release in the next year, including self-service for scenario and inject import and product registration/sign-up. However, because the platform just launched, it is light on native features. In the meantime, they provide a variety of exercise consulting and support services to enhance the platform. They are also planning to create an affiliate program for consultants and others that want to help market the platform and use it in their own consulting practices.

In the comments below, I would be interested in knowing your exercise pain points and how technology can help with exercises. Also, do you use an existing product? If so, what are its strengths and weaknesses?

I also asked Robert to answer some additional background questions you might find interesting.

What does LaunchPAD do?

LaunchPAD helps organizations and governments manage their crisis, emergency, security and business continuity exercise programs. It streamlines the exercise design process and delivers highly realistic crisis scenarios to geographically dispersed teams.

What/who inspired you to create LaunchPAD?

We originally came up with this idea based on a client that had to complete several emergency and security exercises over a six-week period in order to receive their operating license. In 2009, Canaport LNG, a large liquefied natural gas terminal, had various regulators and public safety officials that required them to conduct a tabletop, functional and full-scale exercise prior to officially unloading the first LNG tanker. Pressure also came from the public as well as from many internal and external stakeholders. The major issue was scheduling all the different stakeholders to be at the various exercises over a short period of time. We decided to put all three exercises online and allow approved stakeholders access so they could be a part of the validation process. This is when LaunchPAD was born. Several regulators and public safety officials commented on how easy and effective it was to access LaunchPAD and see the progression of the exercises. It was at that point in time that we realized that we were onto something and decided to develop the product further.

What challenges do you help your customers overcome?

LaunchPAD helps in a number of areas. Scenario planning should be customized to meet a set of specific objectives during each exercise. Many organizations will use the same PowerPoint deck over and over. This might be a storm or other scenario with bulleted text and limited visuals, which never engages participants. With the LaunchPAD enhancement services (videos, audio, simulated social media and other customized visuals) participants get involved in the exercise as it’s more realistic – and challenging, as an actual crisis would be. Another benefit is that LaunchPAD stores all the scenarios so they can be repurposed to get new team members up to speed or used as a reminder for current teams or individuals. Logging team or individual responses is another feature we added to the application to allow users the ability to record their responses to the various injects that move the scenario forward and print out a PDF at the end of the session. There are many other benefits.

What is next for Preparedex/LaunchPAD?

We recently launched a number of eLearning courses through our online learning platform. These courses provide our clients as well as individuals the opportunity to educate their employees on an ongoing basis from anywhere in the world. This cost-effective training option provides anyone the opportunity to educate on a variety of specialist crisis, emergency, security and business continuity subjects without having to travel. We believe, and data shows, that online training and educational platforms like LaunchPAD are increasing due to the ongoing growth of the internet. We are already becoming known as the “exercise specialists” and want to continue that trend.

How can people get in touch and learn more?

We have two main websites: www.preparedex.net is where you will find our digital solutions and www.preparedex.com is our main corporate site.

My contact information is: rob.burton@preparedex.com Office: +1.401.236.1363 x714

We provide regular crisis simulation exercise and related articles via our newsletter – The Crisis Preparedness Resource Newsletter