I was recently asked this question by a colleague. I didn't have a full answer at the ready, so I thought about it some more.

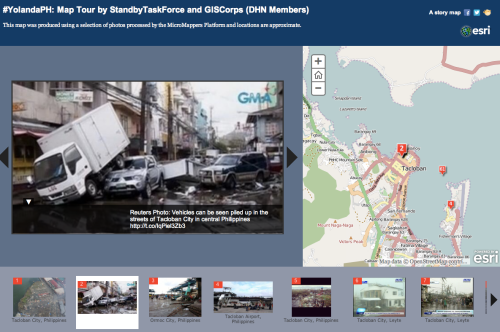

Crisis mapping is usually conducted with the aim of producing "maps" that have key geographic data relevant to a response. According to Wikipedia,

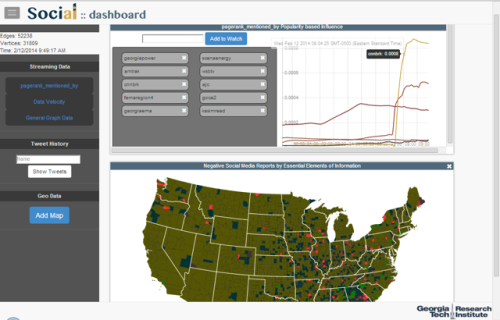

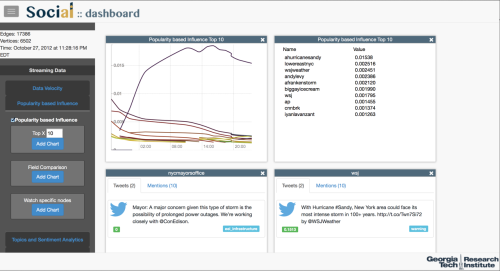

"Crisis mapping is the real-time gathering, display and analysis of data during a crisis, usually a natural disaster or social/political conflict (violence, elections, etc.)."

So why is crisis mapping so popular? To understand its popularity, we have to look at when "mapping" was first popularized: the response to the Haiti earthquake on January 12, 2010. Crisis mapping did exist before Haiti, but it relied primarily on the resources and motivations of National Geographic.

To support the response effort, a group of "mappers" nowhere near the earthquake used an open source tool called Ushahidi to begin mapping tweets and other information collected from the Internet to provide better situational awareness. At one point, Craig Fugate, the Administrator of FEMA, praised the Haiti Crisis map as "the most comprehensive and up-to-date map available."

https://twitter.com/CraigatFEMA/status/8082286205

The "crisismappers," as they later became known, were just a group of unaffiliated and spontaneous volunteers. Most had no prior mapping or GIS experience. They worked independently of any one authority to produce maps that would be useful to on-the-ground responders and coordinators.

Ushahidi was designed around the needs of a consumer and a problem, not a list of technical requirements handed down by an organization. As a result, the software was developed for non-technical people to use. This enabled people not formally trained in mapping and GIS to support mapping efforts and launched a slew of publicity for Ushahidi as the go-to crisis mapping tool.

Of course, like every platform, Ushahidi has its limitations. Still, Ushahidi has worked hard in recent years to improve the software and even released a hosted version called CrowdMap. Similarly, other tools such as MapBox have devoted considerable effort to developing easy-to-use mapping tools.

However, easy-to-use tools, while important, are not the only reason for the popularity of crisis mapping.

Consumerization

This "consumerization" of technology is now enabling mapping to shift from an EOC support function to a skill of the modern emergency manager. Without the support of a technical specialist, emergency managers can begin to answer their own questions more quickly and easily throughout a response. They can go into greater detail in their analysis and research to better understand the situation before them.

This was a critical factor in allowing the crisis mappers to utilize Ushahidi during the Haiti response. They were able to easily adjust their work based on the expanding needs of on-the-ground responders without much technical knowledge and support.

Consumer-based technologies help reduce interdependencies, add efficiencies, and enable emergency managers and responders at all levels of the response to take more ownership of their functional area. Emergency managers get to focus on their domain and answer their own questions as the response progresses, while the GIS specialist is freed up to work on more complex geospatial needs applicable to a broader audience. Pretty soon, there will be no need for a GIS specialist as everyone will be a GIS specialist! The skill is becoming commoditized and ubiquitous.

Availability of Data

Getting data from multiple sources is becoming easier and easier as governments and organizations devote more resources to "freeing" data from their closed, antiquated and locked databases. The shift in thinking has moved from protecting all data from outsiders to recognizing the value of certain shared data across different organizations. In the case of Haiti, the crisis mappers were able to pull public data via social media and a special texting shortcode that had implied consent. However, a lot of great data still exists in the silos of organizations.

In early 2011, NYC hired a Chief Digital Officer to help navigate the complex policies that had previously prevented such access to data. To help disseminate data, NYC launched an Open Data Portal where you can easily access flood zone, shelter and fire station location data in a variety of formats. Better yet, you can actually bring this data into your own systems and mash it up against other data to produce more value-oriented analysis and solutions. Prior data and real-time data need not be mutually exclusive anymore.

The more data that is available, the more you can do. In creating your risk profile, you can easily see and map which of your buildings or offices are in designated flood zones. Have to discharge patients before, during or after a disaster? Check to see if they may be in a designated flood zone prior to discharge so alternative arrangements can be made.
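To make the flood zone check concrete, here is a minimal sketch of the idea in Python. It assumes you already have a flood zone boundary as a list of (longitude, latitude) points and a list of your own sites. The coordinates, the site names and the function names below are all made up for illustration and are not taken from any real open data portal. The check itself is a standard ray-casting point-in-polygon test.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]  # (longitude, latitude)


def point_in_polygon(point: Point, polygon: List[Point]) -> bool:
    """Ray-casting test: count how many polygon edges a ray going
    east from the point crosses. An odd count means the point is inside."""
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through the point count.
        if (y1 > y) != (y2 > y):
            # Longitude at which this edge crosses that horizontal line.
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def sites_in_flood_zone(sites: Dict[str, Point], zone: List[Point]) -> List[str]:
    """Return the names of sites that fall inside the zone, sorted by name."""
    return sorted(name for name, loc in sites.items() if point_in_polygon(loc, zone))


# Hypothetical flood zone boundary (a simple rectangle) and hypothetical sites.
FLOOD_ZONE = [(-74.02, 40.70), (-74.00, 40.70), (-74.00, 40.72), (-74.02, 40.72)]
SITES = {
    "Clinic A": (-74.01, 40.71),
    "Office B": (-73.98, 40.75),
    "Warehouse C": (-74.015, 40.705),
}

flagged = sites_in_flood_zone(SITES, FLOOD_ZONE)
print(flagged)
```

Real flood zone boundaries are far more irregular than a rectangle and usually come as many polygons, so in practice you would likely reach for a GIS library or a mapping tool. The point is only that once the data is open, the question "which of my sites are at risk?" is a small one to answer.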

Adoption Costs

I have always said that technology should be intuitive for the person who knows his or her job well. This helps reduce costs in two ways: training and efficiency. If a tool is intuitive, less time and money need to be spent on learning how to use it. Additionally, the more intuitive the tool is and the better it matches the needs of the functional area, the easier it is for the designated person to get his or her job done faster and with fewer errors.

Ushahidi was designed with quick adoption in mind, which enabled the crisis mappers to quickly make it their tool of choice. Little training was needed on the tool itself, and the mappers were able to focus more on how to get the data into the system for added value and insights. The simplicity of the system enabled them to work quickly (as quickly as humanly possible) without fretting over the large and expansive feature sets and options that bog down so many tools. In a way, Ushahidi was an "expert" system that focused on the best practices in crowdsourcing rather than giving the user all the options in the world.

Conclusion

Crisis mapping, while the popular concept of the day, is well on its way to becoming a de facto skill in the industry. The lessons from crisis mapping are still being extracted, but the rise in popularity has started giving us a blueprint for what other technologies should embrace.

We are beginning to better understand how technology is helping us do our jobs better. The easier our tools are to use, and the better they perform their designated function, the better off we will be in the future as more data becomes available.